Greetings everyone!

I had my first predicted disk failure occur on my HPE MSA 2040. As always, it was a breeze contacting HPE support to get the drive replaced (since my unit has a 4 hour response warranty).

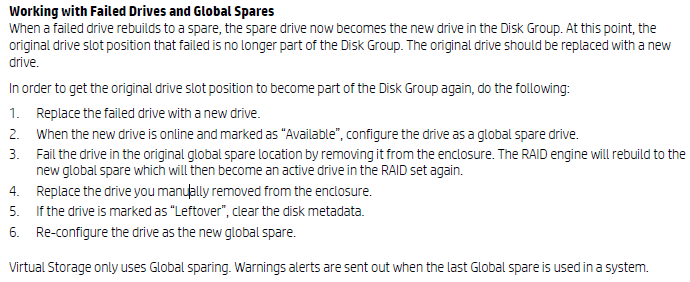

However, with this being my first drive swap I came across something worth mentioning. Typically in RAID arrays when a disk fails, you simply swap out the failed disk and it starts rebuilding, this is NOT the case if you have an HPE MSA 2040 that’s fully loaded with no spares configured.

If you have global spares, the moment the disk is failed, it will automatically rebuild on to available configured spares.

If you don’t have any global spares (my case), the replacement disk is marked as unused and available. You must set this disk as a spare in the SMU for the rebuild to start.

One additional note, if you do have spares and a disk fails, when you replace the disk that failed it will not automatically rebuild that disk back from the spare. You must force fail (pull out) the spare disk for it to start rebuilding on the freshly replaced disk. Always confirm current redundancy levels and activity before forcefully failing any disks!

As per HPE’s MSA 1040/2040 Best Practices document:

Source: https://h50146.www5.hpe.com/products/storage/whitepaper/pdfs/4AA4-6892ENW.pdf

Source: https://h50146.www5.hpe.com/products/storage/whitepaper/pdfs/4AA4-6892ENW.pdf

Thanks for the quick Response!

The only option I see in regarding global spare is selecting ” Change Disk Group Spare” and I am unable to click on anything within that option box. Are there any specific steps or options with the GUI I need to access/follow?

Scott, I see you’re online. I’m going to send you a message on chat.

Got the new drive in and swapped it out and automatically went into rebuild mode. I think I got pretty much figured out now. Weird the other drive when straight into archive mode and was solid green. Must of been a used drive from before.

I know this is an old thread but figured ‘d leave a question.

Had a drive go bad in slot 9. we “believe” that we had 2 Global spares but are not entirely positive. We believe the Global Spares were on disk 2 and 11 and when disk 9 went bad that the Global spare in slot 2 took over. When I replaced the drive in slot 9 this morning I’m pretty sure it said Available but now it is showing as a Vdisk spare? I can’t find a way to make it a global spare.

600GB SAS drives in a Raid 10 array 8 Drive are in Volume, 2 in another with what we thought were 2 Global spares Exchange environment so don’t want to blow away anything.

Does what I said makes sense or not?

Thanks

Hi Jeff,

You should look at your config and check your spare configuration. You’ll also be able to use this method to add a new disk as a global spare.

Log in to the SMU, click on “System” on the left. Now click on “Action”, and select “Change Global Spares”. This will show you disks you can add as a Global Spare, and also show you configured Spares.

Since you’re not familiar with the config of your unit, I’d recommend checking the health to make sure none of the RAID arrays or disk groups are running in a degraded state.

Additionally, you can find out what disks are members of a disk group and pool by going in the SMU to “Pools” and viewing the different pools and disk groups.

Hope this helps.

Stephen

I had a drive give an intermittent code 58 so I decided to replace it. Thanks for the heads up on having to pull the spare after replacing the original bad drive. Saved me from a lot of head scratching!

Armando

If you have a drive that has an warning of a failure, can you force disconnect that in the configuration software to have the spare build itself as the main, and then hotswap the new drive in the former main drive slot, making the former spare as a main drive?

Hi PaulD,

That is the default behavior when a drive fails.

I have a similar but slightly different issue. My boss removed one active disk to look at the model number, part number, etc. so we can order more and expand our storage. Once he reinserted the drive, the controller detected it, but it sees that it was previously part of a volume group and won’t allow me to add it back to the same group. I also cannot add it as a spare, and I surmise that I will need to wipe the disk so that I can add it back as a spare to rebuild into the original volume group. The problem is, I don’t know how to do that in the storage manager system. I don’t see any options where I can just wipe the disk.

Side note: I had tried to suggest that we connect to the SAN Manager before removing the drive to get the drive particulars, but that didn’t happen.

HI Nelson,

That is correct. Disks that are used store the Metadata of the array so that if other disks fail, the configuration is stored. This protects disks.

I could be wrong, but I think there is somewhere to wipe/repurpose the drive in the SMU. If not you’ll need to wipe it before using it.

Cheers,

Stephen

Dear Stephen,

As per your mentioned procedure, we need to fail the previous global spare so that the new replaced hard disk rebuilds into its original disk group.

My question is, what would be the issue if we keep the newly replaced hard disk for global spare and previous global spare hard disk which is now part of disk group as it is.

Hi Anwar, that would be fine to leave it.

Cheers

Goodmorning,

Is there a way to manually fail a drive, something like “right-click -> fail drive” from the SMU or does one always have to pull a drive to force it to go to the global hotspare?

We have an end customer that is nervous about a message in the logfile (impending or predicted drive failure).

Thanks!

Regards

Remco